Overview

This project is a collaboration among three disciplines:

- systems neuroscience and sensory psychophysics (Sergei Gepshtein at the Salk Institute for Biological Studies),

- production design and world building (Alex McDowell at the USC World Building Media Lab), and

- architectural and urban design (Greg Lynn at the UCLA Department of Architecture and SUPRASTUDIO).

Using computational and experimental tools developed at the Salk Institute, we conduct a series of studies at USC and UCLA. The goal is to reveal the visual organization of the spaces created by built environments.

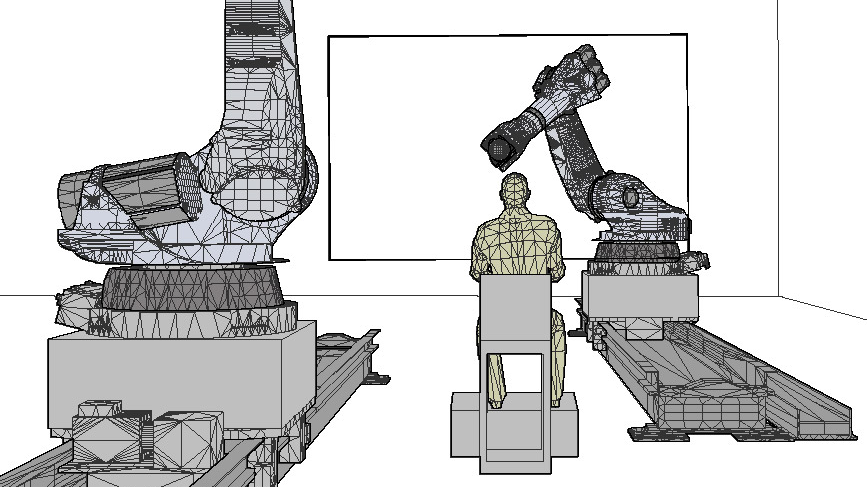

Most of these studies follow the format of a psychophysical experiment. Participants view static and dynamic visual patterns displayed on large physical surfaces that industrial robots move through space. Using a variety of visual tasks (detection, discrimination and direct reports), we measure the spatial and temporal characteristics of visual perception under multiple viewing conditions. For example, we vary the viewing distance to the patterns, the rate of motion within the patterns, and the speed at which the projection screen moves. In every case, we map the spatial and temporal boundaries of perception in large spaces. These maps serve the forthcoming case studies in architectural and urban design, as well as experiments in virtual architecture, mixed reality and other immersive media.
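Varying the viewing distance matters because the same physical pattern subtends a smaller visual angle as the viewer moves away. This raises the pattern's spatial frequency on the retina. The following is a minimal sketch of that standard conversion. It is not taken from the project's tools, and it assumes a periodic pattern such as a grating, viewed head-on. The function names `cycles_per_degree` and `temporal_frequency_hz` and the 5 cm period are hypothetical. The second function covers the "rate of motion" condition, assuming a pattern drifting at a constant angular speed.

```python
import math


def cycles_per_degree(period_m: float, distance_m: float) -> float:
    """Spatial frequency (cycles per degree of visual angle) of a periodic
    pattern with the given physical period, viewed head-on from distance_m.
    Hypothetical helper for illustration only."""
    # Visual angle subtended by one period: 2 * atan(p / (2 d)), in degrees.
    angle_deg = math.degrees(2.0 * math.atan(period_m / (2.0 * distance_m)))
    return 1.0 / angle_deg


def temporal_frequency_hz(speed_deg_per_s: float, cpd: float) -> float:
    """Temporal frequency seen at one point on the retina when a pattern of
    spatial frequency `cpd` drifts at `speed_deg_per_s` (assumed constant)."""
    return speed_deg_per_s * cpd


if __name__ == "__main__":
    # Assumed 5 cm pattern period; doubling the distance roughly doubles
    # the retinal spatial frequency for small visual angles.
    for d in (1.0, 2.0, 4.0, 8.0):
        f = cycles_per_degree(0.05, d)
        print(f"{d:4.1f} m -> {f:5.2f} cyc/deg, "
              f"{temporal_frequency_hz(2.0, f):5.2f} Hz at 2 deg/s")
```

Under these assumptions, a single physical stimulus spans a range of retinal frequencies as the viewer or the screen moves. That is one reason distance and motion are manipulated together.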

The project proceeds in several stages. First, measurement methods are developed at the Salk Institute and adapted to immersive media at the USC World Building Media Lab. The work then continues at UCLA SUPRASTUDIO, where we use projection mapping on surfaces moved by robotic arms, which are controlled by the Bot&Dolly integrative software.